The idea behind task-based usability testing is that the user experience of every website or application is made up of a series of steps along the user’s journey, each of which must be optimized to guide users to their end goal.

Each task, then, while part of a larger overall experience, is also a unique opportunity to create an intuitive and seamless interaction for the user. Finding where we fail to do this is how we improve our websites and applications.

Quantifying task usability

So if tasks are the building blocks of usability testing, is there a way to measure the success of those tasks? How can we think quantitatively about the unique usability of each task we ask our testers to complete?

Qualitative feedback identifying problem areas is an invaluable output of user testing, but it does not allow us to compare usability across tasks and see the relative weight users assign to the problems (or lack of problems) they faced in each separate task.

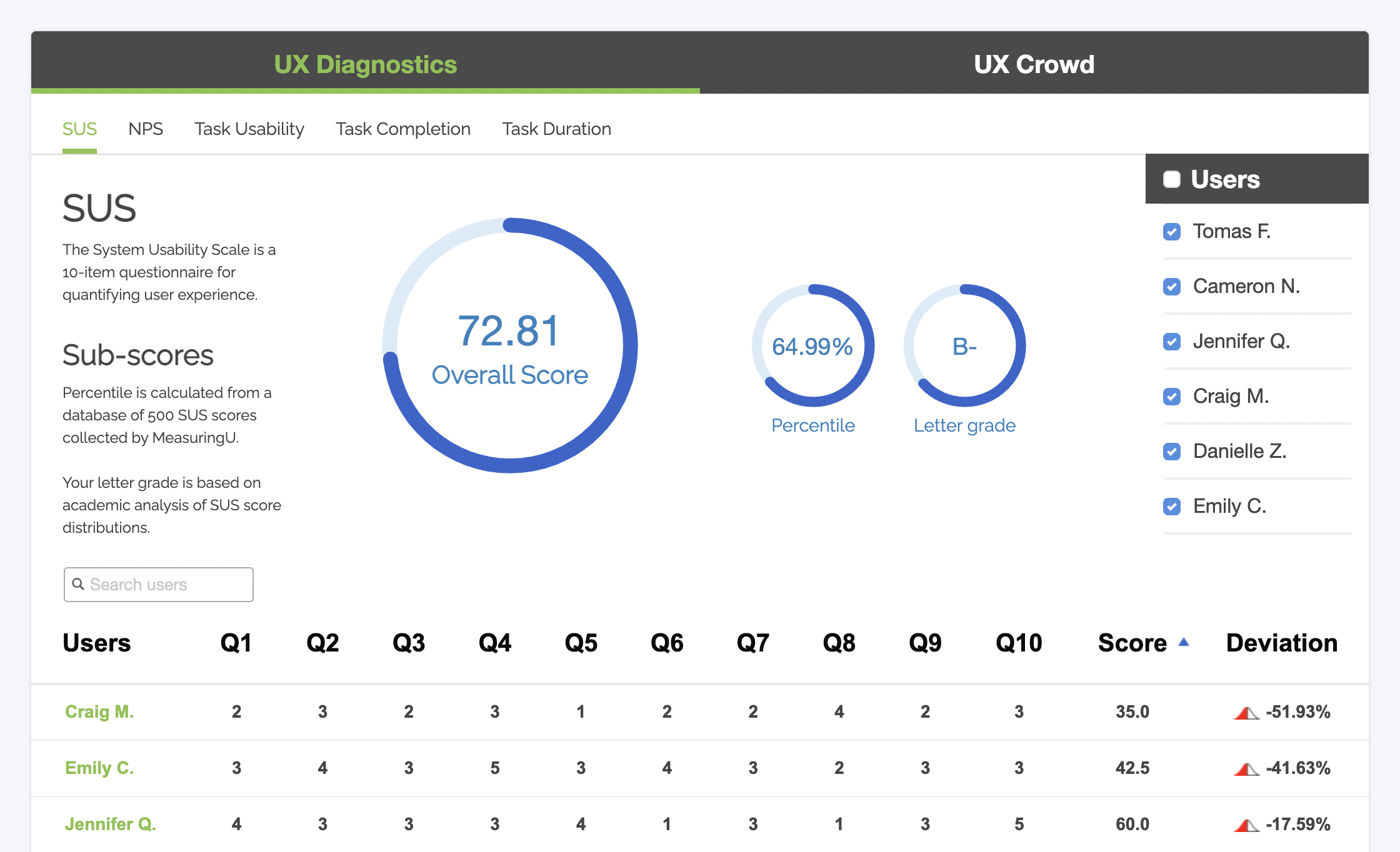

With a general usability metric like the System Usability Scale (SUS), researchers can complement qualitative feedback with a way to measure and quantify overall system satisfaction and usability. But even a short 10-item questionnaire like SUS would quickly become burdensome for testers if it were repeated after every task.

Measurable user testing metrics like number of clicks or time taken per task are useful for getting a handle on the effectiveness and simplicity of tasks, and are built into the bones of usability testing anyway, but they are not comprehensive. They require a great deal of extrapolation, and are better suited to benchmarking and setting targets.

The Single Ease Question (SEQ)

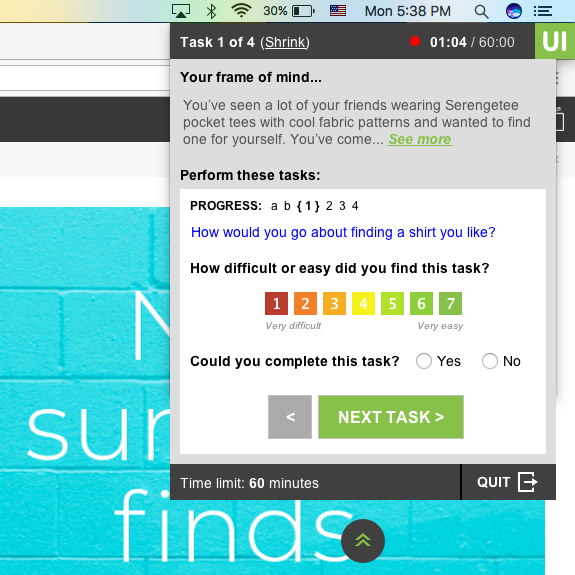

A more tightly focused method, which does not pile significant amounts of time, effort, or complexity onto the tester (or the researcher), is the Single Ease Question: the SEQ.

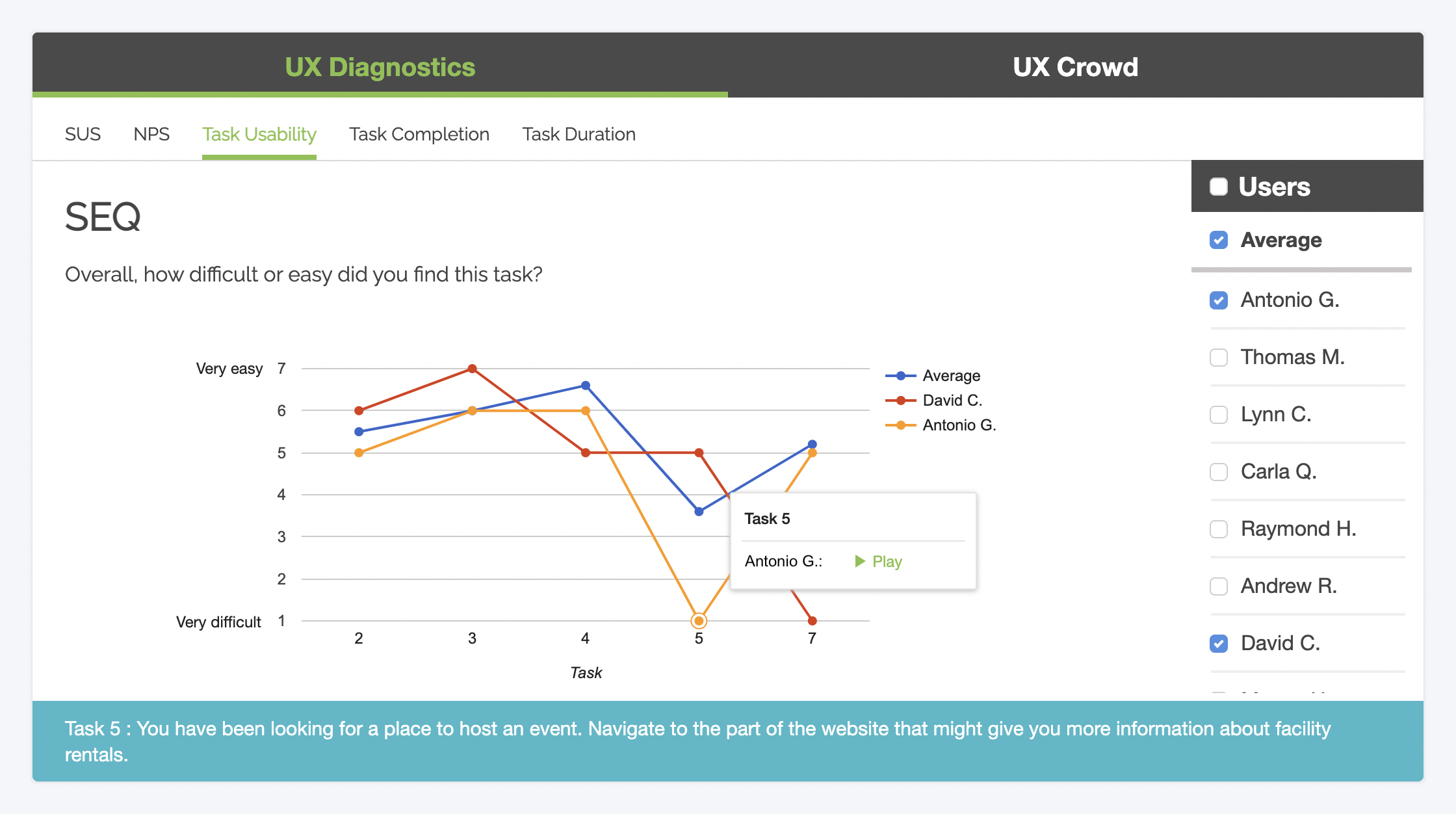

Like SUS, PSSUQ, and other psychometric survey models, the Single Ease Question uses a Likert Scale-style response system; but the similarities stop there. As its name implies, SEQ is just one question: “How difficult or easy did you find the task?”

The response scale has 7 points, with 1 representing “Very difficult” and 7 representing “Very easy.”

This 7-point scale allows room for plenty of nuance and a diversity of responses, while still preserving the ‘only-one-question’ simplicity of the SEQ.
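To make the scale concrete, here is a minimal sketch of how SEQ responses might be recorded and averaged per task so that tasks can be compared side by side. The task names and ratings are made up for illustration, and the helper names (`validate_seq`, `mean_seq_by_task`) are hypothetical, not part of any particular tool.

```python
from statistics import mean

# SEQ scale bounds: 1 = "Very difficult", 7 = "Very easy"
SEQ_MIN, SEQ_MAX = 1, 7


def validate_seq(score: int) -> int:
    """Return the score unchanged if it is a whole number from 1 to 7."""
    if not isinstance(score, int) or not SEQ_MIN <= score <= SEQ_MAX:
        raise ValueError(f"SEQ score must be an integer from 1 to 7, got {score!r}")
    return score


def mean_seq_by_task(responses: dict[str, list[int]]) -> dict[str, float]:
    """Average the SEQ ratings collected for each task.

    Assumes every task has at least one rating; statistics.mean raises
    StatisticsError on an empty list.
    """
    return {
        task: float(mean(validate_seq(s) for s in scores))
        for task, scores in responses.items()
    }


# Illustrative (invented) ratings from four testers on two hypothetical tasks
responses = {
    "find_pricing": [6, 7, 5, 6],
    "create_account": [3, 4, 2, 5],
}

averages = mean_seq_by_task(responses)
for task, avg in averages.items():
    print(f"{task}: mean SEQ = {avg:.2f}")
```

In this invented data set, the lower average for the second task would point to it as the first place to look for usability problems.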

The Single Ease Question has been found to be just as effective a measure as other, longer task usability scales, and it also correlates (though not especially strongly) with metrics like task duration and completion rate.

In addition to its usefulness as a quantification tool, the SEQ can provide actionable diagnostic information with the inclusion of one more query: “Why?”

MeasuringU, a firm specializing in quantitative UX research, recommends asking testers the reason behind their rating for scores of 5 or less (on a scale of 1-7) to get to the root of sub-par performances. Though this doubles the length of this short survey, it adds critical value by tying each low score to specific problems that you can then act on to improve your website.
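The follow-up rule described above can be sketched in a few lines. This is only an illustration of the "5 or less" threshold; the function names and the idea of returning the next prompt as a string are assumptions, not how any specific survey tool works.

```python
from typing import Optional

# Threshold from the recommendation above: ask "Why?" for scores of 5 or less
FOLLOW_UP_THRESHOLD = 5


def needs_follow_up(score: int, threshold: int = FOLLOW_UP_THRESHOLD) -> bool:
    """Return True when an SEQ score (1-7) is low enough to warrant a "Why?"."""
    if not 1 <= score <= 7:
        raise ValueError(f"SEQ score must be from 1 to 7, got {score!r}")
    return score <= threshold


def next_prompt(score: int) -> Optional[str]:
    """Hypothetical helper: the follow-up question to show, or None to move on."""
    return "Why?" if needs_follow_up(score) else None


# Print the follow-up decision for every point on the scale
for score in range(1, 8):
    print(score, next_prompt(score))
```

Keeping the threshold as a parameter makes it easy to experiment with a stricter or looser cutoff if asking "Why?" after most tasks proves too demanding for testers.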

Interpreting and expanding the SEQ with UX Diagnostics

As part of our effort to provide a full range of both qualitative and quantitative data perspectives on UX research and usability testing, Trymata has added the SEQ to our UX Diagnostics toolbox to help you understand the usability not only of your website as a whole, but also of the individual steps on the user’s journey through it.

Besides the task usability data from the SEQ, this quantitative toolbox also includes your choice of psychometric surveys (such as SUS), the NPS (Net Promoter Score), and task completion rates and duration times.

With UX Diagnostics, you can easily see (and quantify) the journey of each user, task by task, as well as the average experience overall. It’s a great way to home in on the most interesting users in your study with the most critical feedback, before you ever even watch a video!

Built-in SEQ functionality is included with our Team and Enterprise plans, as part of the UX Diagnostics feature suite.